A teenager scrolls through their phone late at night. One video becomes five, then twenty. A recommendation turns into a rabbit hole. Somewhere between curiosity and compulsion, the line blurs. It feels like choice—but it isn’t entirely.

This is the world of Algorithms & Society, where invisible systems quietly shape what we see, what we buy, who we trust, and sometimes even what we believe.

The Invisible Architecture of Algorithms & Society

At its simplest, an algorithm is a set of step-by-step rules for turning an input into an output. But scale changes everything. When those rules are embedded into platforms used by billions, they stop being technical tools and start becoming social forces.

Search engines decide what information appears credible. Streaming platforms decide what stories get visibility. Social media feeds decide what emotions are amplified. Over time, these decisions don’t just reflect society—they begin to influence it.

The shift is subtle but profound. Instead of actively seeking information, people increasingly receive curated realities.

From Neutral Code to Cultural Power

Algorithms were once seen as neutral—cold, mathematical, objective. But neutrality fades when human behavior enters the equation.

Consider how platforms optimize for engagement. Content that triggers strong reactions—anger, excitement, outrage—tends to perform better. Algorithms learn this quickly. The result? A digital environment that prioritizes emotional intensity over nuance.

This is where Algorithms & Society intersects with psychology. Human attention becomes the currency, and algorithms become the traders.

It’s not that algorithms are designed to divide people. But when divisive content keeps users engaged, a system optimized for engagement learns to show more of it.
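To make the mechanism concrete, here is a minimal Python sketch of an engagement-ranked feed. Everything in it is a hypothetical illustration rather than any real platform's code: the `Post` fields, the `emotional_intensity` score, and the assumed link between intensity and clicks in `simulate_audience` are all invented for the example. The point is that the ranker only ever looks at clicks, yet the most intense post still ends up on top.

```python
from dataclasses import dataclass


@dataclass
class Post:
    title: str
    emotional_intensity: float  # hypothetical score in [0, 1]; never read by the ranker
    impressions: int = 0
    clicks: int = 0


def observed_engagement(post: Post) -> float:
    """Smoothed click-through rate: the only signal the ranker optimizes."""
    return (post.clicks + 1) / (post.impressions + 2)


def simulate_audience(post: Post, viewers: int = 1000) -> None:
    """Assumed audience behavior: more intense posts are clicked more often."""
    click_probability = 0.05 + 0.30 * post.emotional_intensity
    post.impressions += viewers
    post.clicks += round(viewers * click_probability)


def rank_feed(posts: list[Post]) -> list[Post]:
    """Order the feed by observed engagement, highest first."""
    return sorted(posts, key=observed_engagement, reverse=True)


posts = [
    Post("Measured policy explainer", emotional_intensity=0.1),
    Post("Outraged hot take", emotional_intensity=0.9),
    Post("Local event recap", emotional_intensity=0.3),
]
for post in posts:
    simulate_audience(post)

feed = rank_feed(posts)
for post in feed:
    print(f"{observed_engagement(post):.3f}  {post.title}")
```

Nothing in `rank_feed` mentions emotion or outrage. The ordering follows from the objective (clicks) combined with the assumed audience behavior, which is the sense in which the system "adapts" without being designed to divide.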

Why This Conversation Is Trending Now

A decade ago, algorithms were a niche topic. Today, they sit at the center of debates around democracy, business, and personal freedom.

Several shifts explain why:

- The rise of AI-driven systems that make increasingly complex decisions

- Growing awareness of algorithmic bias in hiring, lending, and policing

- The economic power of data-driven companies

- The realization that digital platforms influence real-world outcomes

When elections, job opportunities, and financial access can be influenced by automated systems, the stakes change dramatically.

Algorithms are no longer just backend tools. They are frontline actors in society.

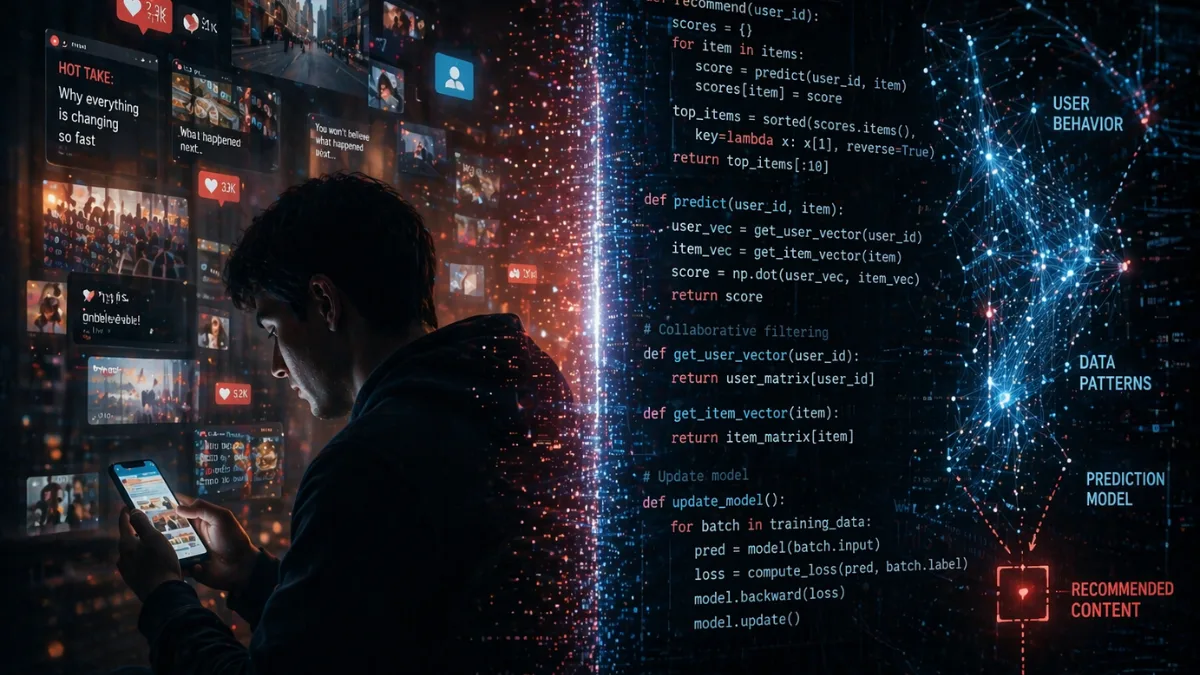

The Business of Predicting You

Behind every recommendation lies a business model. Algorithms don’t operate in isolation—they are designed to serve specific goals.

For most platforms, that goal is simple: keep users engaged.

The more time you spend, the more data you generate. The more data you generate, the better the system predicts your behavior. And the better it predicts, the more effectively it can influence your next action.

This feedback loop is powerful.

It explains why an ad can seem to anticipate something you had only thought about, or why your feed seems to “understand” you better over time. It’s not intuition or mind reading. It’s pattern recognition at scale, inferring your interests from what you search, click, and linger on.

In Algorithms & Society, prediction becomes persuasion.
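The "more data, better prediction" step of that loop can be illustrated with basic statistics. The sketch below is an assumption-laden simplification: it treats each item shown as an independent observation of a fixed click rate, and the 30% rate and the `standard_error` helper are hypothetical. Real recommender models are far more complex, but the direction of the effect is the same.

```python
import math


def standard_error(click_rate: float, observations: int) -> float:
    """Standard error of a click rate estimated from independent observations."""
    return math.sqrt(click_rate * (1 - click_rate) / observations)


true_click_rate = 0.3  # hypothetical: the user clicks 30% of items on some topic
for observations in (10, 100, 1_000, 10_000):
    error = standard_error(true_click_rate, observations)
    print(f"{observations:>6} observations -> typical error of about {error:.3f}")
```

Under these assumptions, a hundred times more data cuts the typical error by a factor of ten, which is one reason time spent on a platform translates so directly into predictive power.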

When Personalization Becomes Limitation

Personalization sounds appealing. Who wouldn’t want a tailored experience?

But there’s a hidden trade-off. The more algorithms learn about you, the more they filter your world accordingly.

You see more of what you already like. You encounter fewer opposing perspectives. Over time, this creates what many call a “filter bubble.”

The danger isn’t just misinformation—it’s narrowing perception.

A society shaped by algorithms risks becoming fragmented, with individuals living in parallel realities, each curated by data.
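The narrowing described in this section can be sketched as a small simulation. This is a toy model under stated assumptions, not a description of any real recommender: the topic names, the click probabilities, and the rule that feed share is proportional to past clicks are all hypothetical. Expected (fractional) clicks are used instead of random draws so the result is repeatable.

```python
def feed_shares(click_counts):
    """Share of the feed each topic gets, proportional to smoothed past clicks."""
    smoothed = {topic: clicks + 1.0 for topic, clicks in click_counts.items()}
    total = sum(smoothed.values())
    return {topic: value / total for topic, value in smoothed.items()}


def simulate_personalization(click_probability, rounds=30, items_per_round=20):
    """Return the favorite topic's share of the feed at the start of each round."""
    click_counts = {topic: 0.0 for topic in click_probability}
    favorite = max(click_probability, key=click_probability.get)
    favorite_share_by_round = []
    for _ in range(rounds):
        shares = feed_shares(click_counts)
        favorite_share_by_round.append(shares[favorite])
        for topic, share in shares.items():
            shown = items_per_round * share
            # Expected clicks: exposure times the user's assumed click probability.
            click_counts[topic] += shown * click_probability[topic]
    return favorite_share_by_round


favorite_share = simulate_personalization(
    {"sports": 0.6, "politics": 0.4, "science": 0.3}
)
print(f"round 1: {favorite_share[0]:.2f}, round 30: {favorite_share[-1]:.2f}")
```

The feed starts out evenly split across the three topics. Because exposure drives clicks and clicks drive exposure, the favorite topic's share rises every round, even though the user's underlying preferences never change.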

Bias in the System: A Human Problem in Machine Form

Algorithms learn from data. And data reflects human history—including its biases.

When past hiring decisions favored certain groups, hiring algorithms can replicate that pattern. When historical policing data is uneven, predictive systems can reinforce those imbalances.

This is one of the most critical challenges within Algorithms & Society: machines don’t eliminate bias—they can scale it.

Fixing this isn’t just a technical problem. It’s a social one. It requires rethinking what fairness looks like in a system designed for efficiency.
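A deliberately simple sketch shows how a model can turn a historical imbalance into a sharper one. The data, the groups "A" and "B", and the `fit_hire_rates` and `recommend` helpers are all hypothetical, and the "model" here just memorizes past hire rates. Real hiring systems are more sophisticated, but they can fail in the same way when group membership, or a proxy for it, is available as a feature.

```python
from collections import defaultdict

# Hypothetical past decisions: (group, qualified, hired).
# Every candidate is qualified, but group B was hired half as often.
past_decisions = (
    [("A", True, True)] * 80 + [("A", True, False)] * 20
    + [("B", True, True)] * 40 + [("B", True, False)] * 60
)


def fit_hire_rates(records):
    """'Train' by memorizing the historical hire rate per (group, qualified) pair."""
    hired = defaultdict(int)
    seen = defaultdict(int)
    for group, qualified, was_hired in records:
        seen[(group, qualified)] += 1
        hired[(group, qualified)] += was_hired
    return {key: hired[key] / seen[key] for key in seen}


def recommend(model, group, qualified, threshold=0.5):
    """Recommend a candidate when the learned hire rate clears the threshold."""
    return model.get((group, qualified), 0.0) >= threshold


model = fit_hire_rates(past_decisions)

# New applicants: 50 equally qualified candidates from each group.
applicants = [("A", True)] * 50 + [("B", True)] * 50
selected = defaultdict(int)
for group, qualified in applicants:
    selected[group] += recommend(model, group, qualified)

selection_rate = {group: selected[group] / 50 for group in ("A", "B")}
print(selection_rate)  # {'A': 1.0, 'B': 0.0}
```

In this toy case an 80% versus 40% gap in the historical data becomes 100% versus 0% once a threshold is applied: the model does not just copy the old pattern, it hardens it.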

The Illusion of Control

There’s a common belief that users are in control. After all, we choose what to click, watch, and share.

But control in algorithmic environments is layered.

Yes, users make choices. But those choices are shaped by what is presented to them. And what is presented is determined by systems optimized for specific outcomes.

It’s a bit like walking into a store where the layout changes based on your past behavior. You still choose what to buy—but the environment nudges you in certain directions.

In Algorithms & Society, influence doesn’t feel like force. It feels like convenience.

The Social Consequences We’re Still Understanding

The effects of algorithms extend beyond individual behavior.

- Political polarization: Amplified by engagement-driven content

- Mental health concerns: Linked to comparison-driven feeds

- Economic inequality: Influenced by algorithmic access to opportunities

- Cultural shifts: Driven by viral trends and visibility cycles

These aren’t isolated issues. They are interconnected outcomes of systems designed to optimize attention and efficiency.

The challenge is that these systems evolve faster than our ability to regulate or fully understand them.

Where Regulation Meets Innovation

Governments around the world are beginning to respond. Discussions around transparency, accountability, and data rights are gaining traction.

But regulation is complicated.

Too much control can stifle innovation. Too little can allow harmful practices to scale unchecked.

The question isn’t whether algorithms should exist—they are essential. The real question is how they should be governed.

Who decides the rules that shape the rules?

The Future of Algorithms & Society

Looking ahead, algorithms will only become more embedded in daily life.

From healthcare diagnostics to financial decisions, from education to urban planning—automated systems will play a larger role in shaping outcomes.

The next phase won’t just be about recommendation engines. It will be about decision-making systems.

And that raises deeper questions:

- Should algorithms be explainable?

- Who is accountable when they fail?

- How do we balance efficiency with fairness?

These aren’t only technical questions. They are societal ones too.

Conclusion

Algorithms don’t have intentions. But they have impact. And that impact is shaped by the goals we assign to them.

Algorithms & Society is not just about technology—it’s about power, responsibility, and the future of human decision-making.

We are building systems that learn from us, adapt to us, and increasingly guide us. The question is no longer whether algorithms influence society.

It’s whether society is ready to influence its algorithms.

Final Insight

At The Vue Times, we don’t just report trends—we analyze the systems shaping them. As algorithms continue to redefine modern life, staying informed is no longer optional. It’s essential.

Frequently Asked Questions

What is Algorithms & Society?

→ It refers to how algorithms influence social behavior, decisions, and systems, from online content to real-world outcomes like jobs and finance.

How do algorithms affect daily life?

→ They shape what you see online, recommend products, influence news exposure, and even affect decisions in areas like hiring and credit scoring.

Are algorithms biased?

→ Algorithms can reflect biases present in their training data. If not carefully designed, they may reinforce existing inequalities.

Why are algorithms important today?

→ They power major digital platforms and increasingly impact critical decisions, making them central to discussions on ethics and governance.

Can algorithms be controlled or regulated?

→ Yes, through policies focusing on transparency, fairness, and accountability, though balancing regulation and innovation remains a challenge.